Interface Koto

Interface koto generates animation from koto sounds.

SUMMARY

A. Three types of sensors capture koto performance information:

- Piezo sensors on 3D-printed koto bridges

- An IR sensor for the distance between the edge of the koto and the right hand

- A MYO for left-hand movements

B. Processing generates animation from the signals sent by the sensors

C. The animation generates additional sounds/noises

Performed on May 5th, 2016 at Digital Arts Expo

WHAT IF PLAYING A MUSICAL INSTRUMENT COULD DO MORE THAN CREATE MUSIC?

If an interface could allow a musical instrument to create additional audio and visual artwork while it produces its regular music, could that lead to a new approach to, and understanding of, the instrument as a multi-sensory instrument? This question is how the project started. The project builds an interface system that attaches to a real acoustic instrument while preserving performability: the performer should still be able to play the instrument as usual when the interface is attached.

Regarding performability, it is important to minimize stress on the performer. Playing music means that the performer communicates with the audience through the instrument. When the performer can communicate smoothly with the instrument, their musical ideas are conveyed better to the audience. Therefore, the points below are fundamental to the success of this project:

- The instrument can be played as a musical instrument

- The instrument is not damaged, and

- The performer does not need to give up their performance techniques when using the instrument with the interface system

The koto, a Japanese zither, was selected for this project because it is my primary instrument.

Here I first discuss the process of selecting sensors that work well with the instrument’s physical nature. After that, I discuss the issues and solutions involved in implementing the interface system on the koto. Lastly, I explain how this interface koto is performed: in other words, the composition process and the performance from the performer’s point of view.

SENSOR SELECTION

Three traditional performance techniques were selected for this project. The sound production process of each technique was carefully examined, and a sensor was then selected for each one accordingly. The selected traditional performance techniques are:

- Plucking strings

- Bending strings, and

- Scraping strings

FSR

The initial idea was to place an FSR (force-sensing resistor) at the bottom of each bridge, where the bridge touches the surface of the koto body, so that the FSR would receive different types of pressure when the string is plucked or bent. The expectation was that the sensor would receive a quick, short pressure when a string is plucked and a longer pressure when the string is bent, making it possible to detect the duration and the level of pressure of a string-bending action.

This idea did not work out for two reasons. First, the relationship between the left-hand bending gesture and the pressure at the bottom of a bridge was not straightforward. The bottom of the bridge started receiving pressure when the string was bent; however, the pressure stayed almost the same even after the string was released. The pressure level changed back only when the string was plucked again. Though this is an interesting discovery about the relationship between the bending gesture and the pressure on the bridge, it shows that the FSR data do not follow the left-hand gestures.

The second reason is the nature of the koto body. The body of the koto is not lacquered but made of raw wood, so its surface is not consistently smooth. Since the positions of the koto bridges change with each tuning, an FSR cannot be expected to receive the same pressure in the same way from the same left-hand gesture. This makes it difficult to infer from the FSR data how the left-hand bending is performed.

Piezo Sensor attached on the koto bridges

Piezo

Since the FSR proved unsuitable, a Piezo sensor was selected instead to detect the vibration, rather than the pressure, when a string is plucked.
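Detecting a pluck from a vibration signal can be sketched as a simple threshold crossing. This is only an illustration, not the code used in the project: the function name `detect_plucks`, the `threshold` and `release` values, and the sample readings are all assumptions.

```python
def detect_plucks(samples, threshold=100, release=50):
    """Return the indices at which a pluck onset is detected.

    An onset is the first sample at or above `threshold`. The detector
    re-arms only after the signal falls below `release`, so one decaying
    vibration is not counted several times. Both values are hypothetical.
    """
    onsets = []
    armed = True
    for i, value in enumerate(samples):
        if armed and value >= threshold:
            onsets.append(i)
            armed = False
        elif not armed and value < release:
            armed = True
    return onsets


# Made-up readings containing two plucks
readings = [3, 5, 240, 180, 90, 40, 10, 4, 310, 120, 30, 2]
print(detect_plucks(readings))  # [2, 8]
```

Using a lower release level than the trigger level is one common way to avoid double-triggering on a ringing signal; suitable values would have to be found by observing the actual sensor.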

MYO

As for the left-hand bending gesture, MYO (https://www.myo.com/) was selected. MYO is a wearable sensor worn on the arm. It reports orientation, acceleration, and gyroscope data on the X, Y, and Z axes, as well as EMG data from 8 sensors surrounding the arm. The Y-axis orientation is used to detect the left-hand bending gestures.
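One possible way to turn a single orientation value into a bend detector is to calibrate a resting value first and then watch for deviations from it. The sketch below assumes this approach; the function names, the units of the readings, and the `min_deviation` value are hypothetical and are not taken from the project or from the MYO SDK.

```python
from statistics import mean


def calibrate_rest(rest_samples):
    """Average Y-axis orientation readings taken while the left hand is at rest."""
    return mean(rest_samples)


def is_bending(y_orientation, rest_value, min_deviation=5.0):
    """Report a bend when the reading moves far enough from the resting value.

    `min_deviation` is a hypothetical threshold in the same (unspecified)
    units as the orientation readings.
    """
    return abs(y_orientation - rest_value) >= min_deviation


rest = calibrate_rest([10.0, 11.0, 9.0, 10.0])
print(is_bending(10.5, rest))  # False
print(is_bending(17.0, rest))  # True
```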

Observing the MYO orientation data

Wearing MYO

IR sensor attached to the ryukaku (the end bridge)

IR

An IR sensor is used to detect the string-scraping technique. In this technique, the right-hand index and middle fingers scrape two adjacent strings, moving from the right end towards the koto bridges. The IR sensor is placed at the right end of the koto to measure the distance between the sensor and the right hand.

The data received by the sensors are sent to Processing via Arduino, and Processing generates an animation.
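Because the hand moves away from the right end during a scrape, the gesture could be recognized as a sustained increase in the measured distance. The following is a minimal sketch under that assumption; `detect_scrape`, the centimeter units, and the `min_travel_cm` and `jitter_cm` values are illustrative and not taken from the project.

```python
def detect_scrape(distances_cm, min_travel_cm=10.0, jitter_cm=0.5):
    """Return True if the readings contain a sustained move away from the sensor.

    A run continues while each reading is no more than `jitter_cm` below the
    previous one. A scrape is reported once a run covers `min_travel_cm`.
    All thresholds are hypothetical.
    """
    if not distances_cm:
        return False
    run_start = distances_cm[0]
    previous = distances_cm[0]
    for current in distances_cm[1:]:
        if current < previous - jitter_cm:
            run_start = current  # hand moved back: start a new run
        elif current - run_start >= min_travel_cm:
            return True
        previous = current
    return False


print(detect_scrape([5, 6, 8, 11, 14, 17]))  # True
print(detect_scrape([5, 6, 5.2, 6, 5.5]))    # False
```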
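The receiving side of such a connection usually has to parse each line of text that arrives over the serial port. The sketch below assumes a hypothetical line format of comma-separated `name:value` pairs; the actual message format used between the Arduino and Processing in this project is not documented here, and the sensor names are placeholders.

```python
def parse_sensor_line(line):
    """Parse a line such as 'piezo:512,ir:37.5' into a dict of floats.

    Returns None for a malformed line, so that a corrupted serial read
    can simply be skipped. The 'name:value' format is an assumption.
    """
    readings = {}
    for field in line.strip().split(","):
        name, sep, raw = field.partition(":")
        if not sep or not name.strip():
            return None
        try:
            readings[name.strip()] = float(raw)
        except ValueError:
            return None
    return readings


print(parse_sensor_line("piezo:512,ir:37.5\n"))  # {'piezo': 512.0, 'ir': 37.5}
print(parse_sensor_line("garbage"))              # None
```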

IMPLEMENTING WITH A REAL INSTRUMENT AND TECHNIQUES

Attaching Piezo Sensors to Koto Bridges

Piezo sensors were first attached to the original koto bridges with tape. The priorities at this stage were to avoid damaging the original bridges and to keep the sensors detachable.

However, it soon became clear that the tape could not hold the sensors firmly enough to detect the vibration accurately. Several other attachment methods were tried with different materials, such as rubber bands. However, none was successful, and the data received were not useful.

3D-printed koto bridge prototypes. From above left to right: prototype versions 1, 2, 3, and 4

3D-Printed Koto Bridges

The solution to the Piezo attachment problem was to 3D-print the koto bridges. In this way, the original bridges are free of damage, and the sensors do not need to be detachable. Several prototypes were made and tested before the design was finalized in order to meet all of these criteria:

- The bridge is the same size as the original bridge

- The bottom surface of the bridge is smooth so the koto body would not be scratched by the bridges

- The top part of the bridge where the string rests is smooth as well so no extra buzz would be produced when the string is plucked, and

- There is a space to attach a Piezo sensor

The koto bridge was designed for 3D printing by Martín Vélez, an awesome artist!

COMPOSITION AND PERFORMANCE

Creating animation with Processing

Animation and Sounds

In this project, animation is created from the data received from three kinds of sensors (Piezo, MYO, and IR), which detect three performance techniques: plucking, bending, and scraping strings. The sensors are tested with the koto and a performer: with the sensors set on the koto, the performer plays the instrument and the data are observed. Based on the data received, thresholds are set, and the data are mapped to ranges that are useful in Processing. The data from each technique are sent to Processing and generate different types of animation. The animation also generates manipulated koto sounds. As a result, the audience has an audio/visual experience that includes the original acoustic koto sounds.
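The thresholding and mapping step can be illustrated with a linear rescale, similar in spirit to Processing's built-in `map()` and `constrain()` functions. This is a sketch only: the function names, the input range, and the output range below are assumptions, not the values used in the performance.

```python
def map_range(value, in_low, in_high, out_low, out_high):
    """Linearly rescale value from [in_low, in_high] to [out_low, out_high].

    Values outside the input range are clamped to the output range.
    """
    if in_high == in_low:
        raise ValueError("input range must not be empty")
    t = (value - in_low) / (in_high - in_low)
    t = max(0.0, min(1.0, t))
    return out_low + t * (out_high - out_low)


def to_visual(value, threshold, in_high, out_low, out_high):
    """Ignore readings below the threshold; map the rest to a visual parameter."""
    if value < threshold:
        return None
    return map_range(value, threshold, in_high, out_low, out_high)


# Hypothetical example: a 10-bit sensor reading mapped to a shape size of 10 to 200
print(to_visual(50, 100, 1023, 10, 200))    # None (below threshold)
print(to_visual(100, 100, 1023, 10, 200))   # 10.0
print(to_visual(2000, 100, 1023, 10, 200))  # 200.0 (clamped)
```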

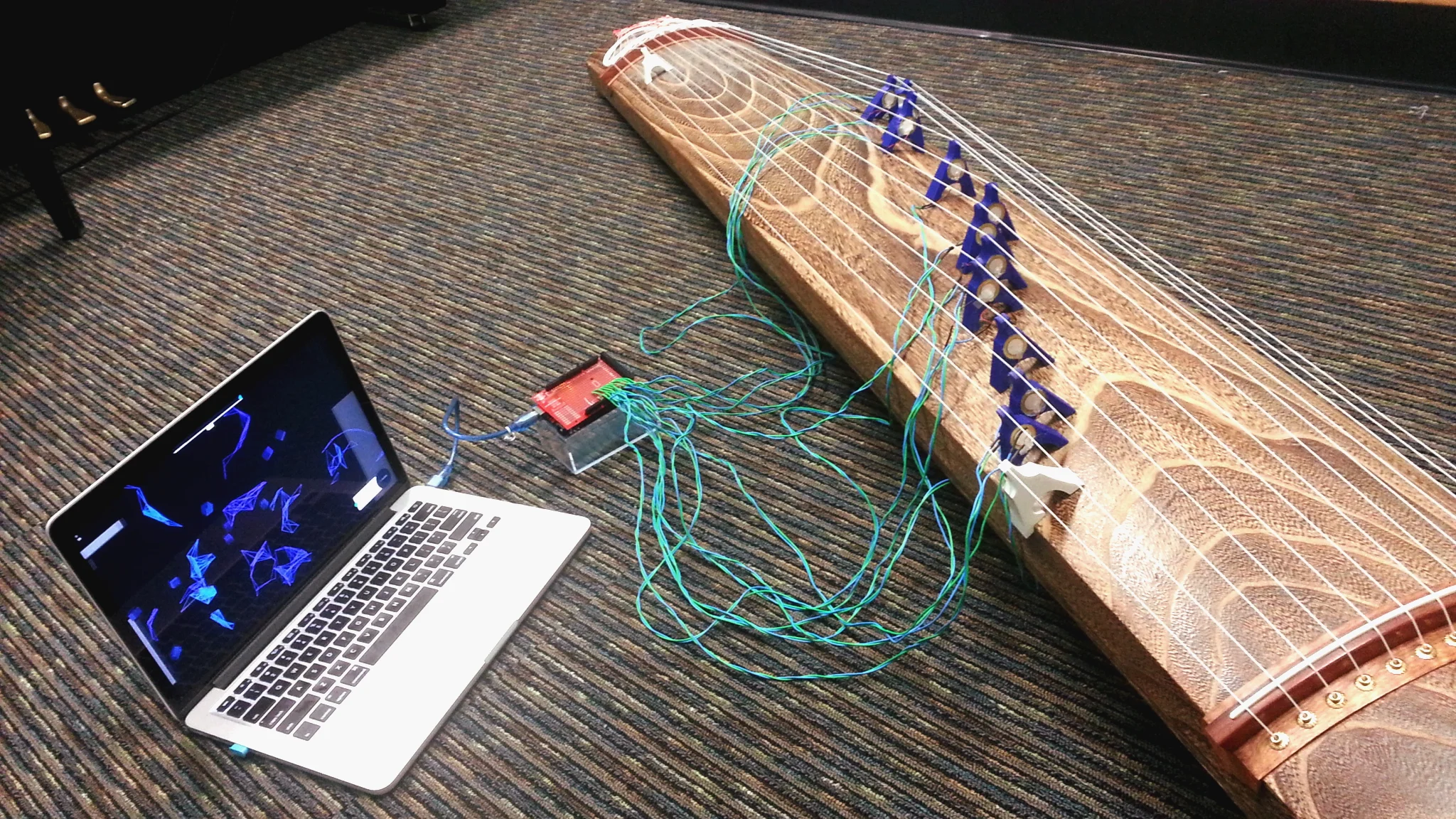

Interface connected to computer generating an animation

Playing the Instrument

From the performer’s point of view, this interface system does not require much extra caution to play the koto and interact with the interface at the same time. Therefore, the performer is able to focus on their own performance without compromising their performance aesthetics in order to communicate with the sensors. In this sense, with the interface attached, the koto can be an audio/visual multi-sensory instrument while still functioning as an acoustic instrument.

CONCLUSION

This interface koto project interacts with an acoustic musical instrument performance while the performer maintains the aesthetics of the instrument's performance techniques. Playing music is a journey of a performer’s musical idea through their body, the instrument, and the air to the audience. The smoother and more efficient this journey is, the better the performer’s idea is communicated to the audience. The function of the interface system here is to expand the means of transmitting the performer’s ideas without disturbing the musical process.

This project achieved this goal with various technologies such as sensors, Arduino, Processing, and 3D printing, creating a multi-sensory experience alongside the traditional acoustic koto sounds. This success was based on a number of close and careful observations of koto techniques, sensor selection, and the data received from performances when the interface and the koto were connected.

The project was also successful in that the performer did not need to make extra effort to familiarize themselves with the interface system. Such new effort is exciting in itself and could be an interesting point to explore in a future project. For this one, however, the focus was to have the performer play as usual: that is, to put the human being at the center and build the interface system around them. The system did work out in that way.

This time, the koto performer created animation through Processing. However, this interface enables a koto performance to do many other things, depending on the artistic idea, how the interface is connected, and how the data are used. It can broadly expand the idea of what kind of art can be created by playing the koto, and how.

Future research may be done in the analysis of other koto performance techniques to develop a more comprehensive koto interface system.